The SAFER AI Protocol

Artificial intelligence is rapidly transforming how organizations generate insights, make decisions, and operate across industries. While AI systems offer significant capabilities, they also introduce risks, including inaccurate outputs, bias, and overreliance on automated responses.

Whatever an AI system produces, responsibility for evaluating and acting on its outputs remains with humans.

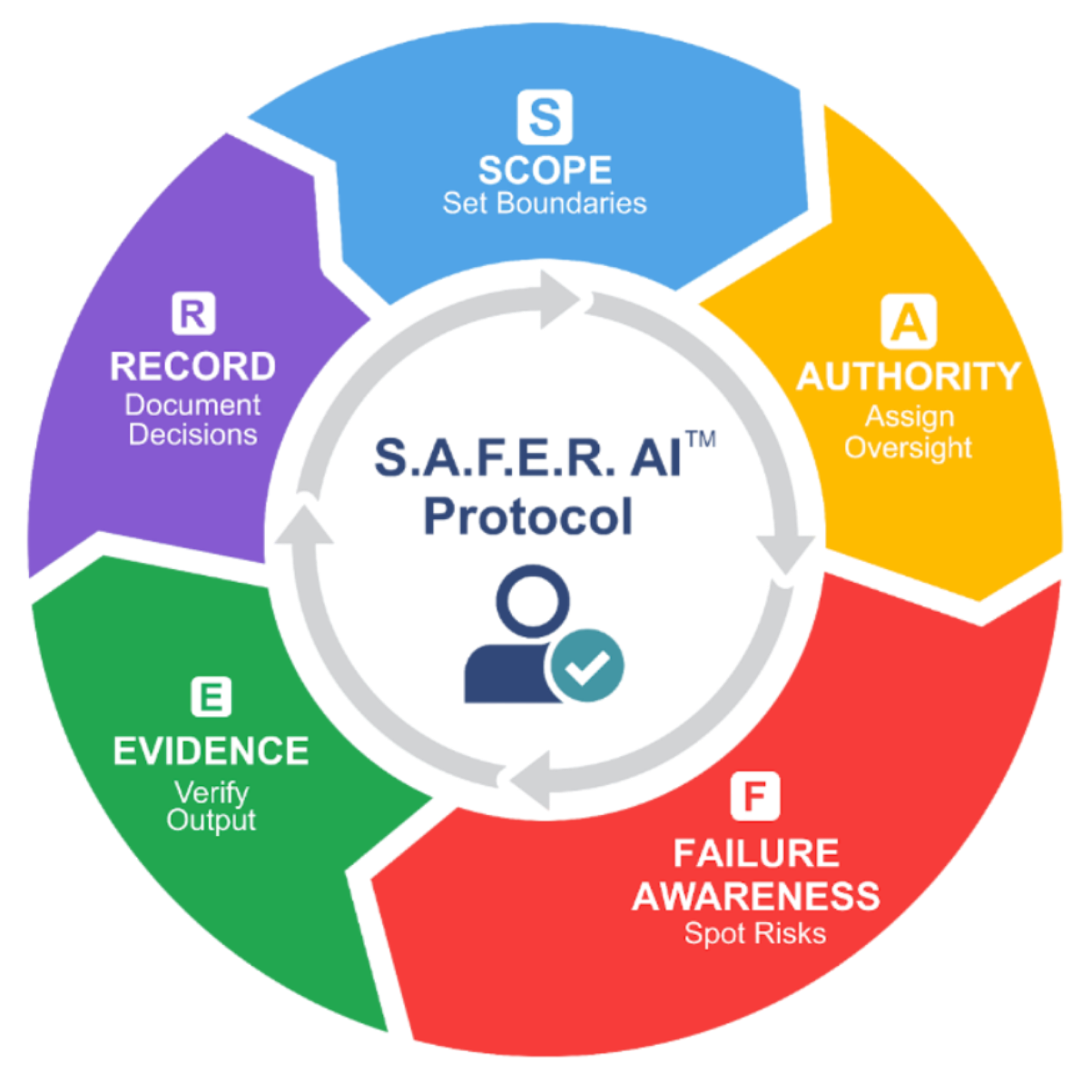

The SAFER AI Protocol™ introduces a structured, human-centered framework designed to guide how individuals and organizations evaluate, verify, and govern AI outputs before they influence decisions.

SAFER AI Framework

The SAFER AI Protocol™ is represented as a continuous evaluation cycle, reinforcing that responsible AI use requires ongoing human judgment, not a one-time validation.

The framework is built on five core stages:

Define the context, boundaries, and appropriateness of AI use.

Establish who is responsible for reviewing and approving AI-generated outputs.

Recognize the limitations of AI systems and the potential consequences of incorrect outputs.

Verify AI-generated information using appropriate validation methods and credible sources.

Document the evaluation process, decision rationale, and outcomes for accountability and transparency.
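As an illustration only, the five stages above could be tracked as a simple review record that flags which stages are still incomplete before an AI output is allowed to influence a decision. This is a minimal sketch, not part of the protocol itself: the class name `OutputReview`, its field names, and the completeness rule (every stage must have at least one non-empty entry) are all hypothetical assumptions introduced for this example.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class OutputReview:
    """One pass through the five stages for a single AI-generated output.

    Field names are hypothetical labels chosen for this sketch; they are
    not the framework's own stage names.
    """
    use_context: str = ""                                        # stage 1: context, boundaries, appropriateness
    reviewer: str = ""                                           # stage 2: who reviews and approves
    known_limitations: List[str] = field(default_factory=list)   # stage 3: limitations and consequences
    sources_checked: List[str] = field(default_factory=list)     # stage 4: validation methods and sources
    decision_rationale: str = ""                                 # stage 5: documented rationale and outcome

    def missing_stages(self) -> List[str]:
        """Return the stages that have no entry yet, in stage order."""
        checks = {
            "use_context": bool(self.use_context.strip()),
            "reviewer": bool(self.reviewer.strip()),
            "known_limitations": len(self.known_limitations) > 0,
            "sources_checked": len(self.sources_checked) > 0,
            "decision_rationale": bool(self.decision_rationale.strip()),
        }
        return [name for name, done in checks.items() if not done]

    def ready_for_decision(self) -> bool:
        """True only when every stage has been filled in (assumed rule)."""
        return not self.missing_stages()


# Example: a review that has only completed the first two stages.
review = OutputReview(
    use_context="Draft summary of a quarterly report",
    reviewer="Finance lead",
)
print(review.missing_stages())
print(review.ready_for_decision())
```

Because the framework is described as a continuous cycle, a record like this would be created for each new output rather than filled in once and reused.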

Why Human Oversight Matters

Artificial intelligence systems are not sources of truth. They are tools that generate outputs from learned patterns, not from verified knowledge. Relying on AI without structured evaluation therefore introduces significant risks: inaccurate outputs may be accepted as factual, decisions may be made without proper verification, and accountability for outcomes can become unclear. The SAFER AI Protocol™ reinforces a critical principle: artificial intelligence should support, not replace, human decision-making. Maintaining disciplined human oversight keeps AI a tool for informed judgment rather than an unchecked authority.

About the Framework

The SAFER AI Protocol™ is both a conceptual and operational framework designed to support responsible artificial intelligence governance and human-centered interaction with AI systems. It provides a structured approach to evaluating AI-generated outputs while promoting accountability and critical reasoning. The framework also contributes to AI literacy by equipping individuals and organizations with the skills to assess and verify AI-generated information responsibly. It is intentionally designed to be adaptable across a wide range of industries, including healthcare, finance, education, corporate environments, and public sector governance, making it applicable wherever AI influences decision-making.

Intellectual Property Notice

The SAFER AI Protocol™, including its name, structure, and visual framework, was developed by Dr. Lola Longe. All associated materials, underlying models, and extended governance components are protected intellectual property. Unauthorized reproduction, adaptation, distribution, or commercial use of the framework or its components is strictly prohibited without prior authorization.

Dr. Longe is recognized for her leadership in responsible AI and regularly speaks on AI accountability, education systems, and the future of human-centered technology.

Whether you are advancing faculty expertise, preparing students for AI-enabled environments, or guiding executive decision-making, we provide structured frameworks and strategic support to ensure responsible AI integration.

How Dr. Longe Inspires Change

“Dr. Longe’s ability to connect academic research with practical solutions is unmatched. Our faculty left her workshop energized and equipped with real tools.”

“Her keynote at our conference was thought-provoking and inspiring. Dr. Longe makes AI approachable without losing depth or rigor.”

“We invited Dr. Longe to consult on our AI policy. She balanced ethics, strategy, and innovation in a way that resonated across our leadership team.”

“Dr. Longe guided us through AI adoption with clarity and empathy. She understood our challenges and helped us build a roadmap we could trust.”

“Her ability to simplify complex AI and blockchain concepts is a rare gift. Students left her lecture with both knowledge and confidence.”

“Dr. Longe’s workshop for our executive team was a turning point. She helped us see where AI adds value and where caution is needed.”

“She doesn’t just lecture — she engages. Dr. Longe challenged our assumptions and sparked meaningful discussions across our organization.”

“Brilliant, practical, and ethical — Dr. Longe brings all three to every session. Our MBA students rated her module as the most impactful of the year.”