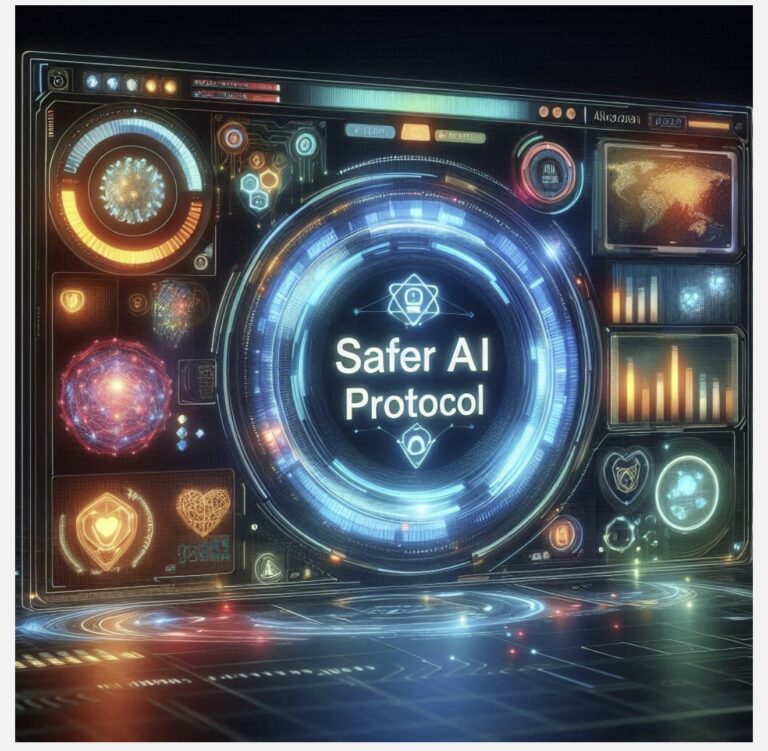

Artificial intelligence is moving beyond simple content generation. Organizations are increasingly adopting systems that support decision-making, automate tasks, and operate with minimal human intervention. This shift creates a significant opportunity, but it also raises the stakes. The question is no longer whether organizations will use AI. The more important question is whether they can do so in a responsible, ethical, and trustworthy way.

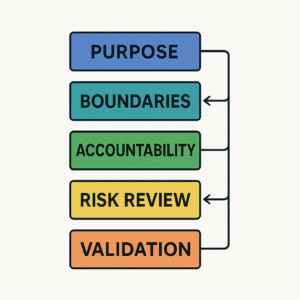

Responsible AI must be treated as a system, not a slogan. It begins with purpose. AI should be introduced to solve a defined problem, strengthen a process, or support a meaningful outcome. When AI is adopted simply because it is available or popular, the result is often weak governance, unclear ownership, and poor implementation.

The next requirement is boundaries. Every AI system should operate within clearly defined limits. Organizations need to determine what the system is permitted to do, what data it can access, and where human judgment must remain firmly in control. Without those boundaries, AI can easily be misapplied in ways that introduce confusion, risk, or harm.
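
As one illustration, the three questions above (what the system may do, what data it may touch, and where a person must decide) can be written down as an explicit policy that is checked before any action runs. The sketch below is a minimal example under stated assumptions, not a prescribed implementation: the `Boundary` class, the `check_request` function, and the action and dataset names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Boundary:
    """Hypothetical policy describing the limits of one AI system."""
    allowed_actions: frozenset          # what the system is permitted to do
    allowed_data: frozenset             # what data it can access
    human_review_actions: frozenset = frozenset()  # where a person must decide


def check_request(boundary, action, datasets):
    """Return 'deny', 'needs_human', or 'allow' for a proposed action."""
    if action not in boundary.allowed_actions:
        return "deny"
    if not set(datasets) <= boundary.allowed_data:
        return "deny"
    if action in boundary.human_review_actions:
        return "needs_human"
    return "allow"


# Example policy with made-up names, for illustration only.
policy = Boundary(
    allowed_actions=frozenset({"summarize_ticket", "draft_refund"}),
    allowed_data=frozenset({"support_tickets"}),
    human_review_actions=frozenset({"draft_refund"}),
)

print(check_request(policy, "summarize_ticket", ["support_tickets"]))
print(check_request(policy, "draft_refund", ["support_tickets"]))
print(check_request(policy, "summarize_ticket", ["payroll"]))
```

Writing the limits down as data, rather than leaving them implicit in prompts or habits, makes them reviewable by the same people who are accountable for the system.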

Accountability must follow. AI does not remove human responsibility. Someone must still review outputs, approve critical use cases, and take ownership of outcomes. Responsible AI requires clear decision-makers, escalation paths, and governance structures that define who is responsible when something goes wrong.

A mature, responsible AI approach also includes risk review. That means evaluating bias, privacy exposure, hallucinations, security concerns, and unintended consequences before AI outputs influence real decisions. Organizations that fail to examine these risks early often find themselves responding to problems that could have been prevented.

Validation is equally essential. AI outputs should not be accepted at face value. They should be tested, verified, and reviewed against credible evidence, human expertise, and appropriate standards. Validation is what separates responsible adoption from blind automation.

Monitoring completes the system. AI behavior can shift over time. Outputs may drift, risks may evolve, and misuse may emerge in ways that were not visible at deployment. For that reason, responsible AI cannot be treated as a one-time exercise. It must become an ongoing discipline of oversight, learning, and continuous improvement.
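
A very simple form of ongoing monitoring is to compare how often recent outputs are flagged by reviewers against the rate observed at deployment. The sketch below assumes reviewers record each output as flagged or not; the `drift_alert` function, the baseline rate, and the tolerance value are hypothetical and would need to be chosen for the specific use case.

```python
def drift_alert(baseline_rate, recent_flags, tolerance=0.05):
    """Return True when the recent flagged-output rate exceeds the
    baseline rate by more than the tolerance.

    baseline_rate: fraction of outputs flagged at deployment (assumed known)
    recent_flags:  list of booleans, one per recently reviewed output
    tolerance:     allowed increase before raising an alert (assumed value)
    """
    if not recent_flags:
        return False  # nothing reviewed yet, so nothing to compare
    recent_rate = sum(recent_flags) / len(recent_flags)
    return recent_rate - baseline_rate > tolerance


# Illustrative data: 3 of the last 10 reviewed outputs were flagged.
recent = [False, False, True, False, True, False, False, False, True, False]
print(drift_alert(baseline_rate=0.02, recent_flags=recent))
```

A check like this does not explain why behavior changed; it only signals that a human should look again, which is the point of treating oversight as a continuing discipline.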

At TrustAIchain, this is the principle that matters most: responsible AI is not about slowing innovation. It is about making innovation sustainable, defensible, and trustworthy. The organizations that will lead in this new era will not simply be the ones that adopt AI quickly. They will be the ones that build systems people can trust.

Dr. Lola Longe is the creator of the SAFER AI™ Protocol, a human-centered framework that helps individuals and organizations govern AI responsibly through clear purpose, defined boundaries, accountability, risk review, validation, and ongoing monitoring.

#ResponsibleAI #AgenticAI #AIGovernance #EthicalAI #TrustworthyAI #HumanCenteredAI #AILeadership #FutureOfAI #DigitalTrust #TrustAIchain